Shifting Video Viewing Behavior Is Forcing Publishers To Revamp Their Cross-Device Programming Strategy

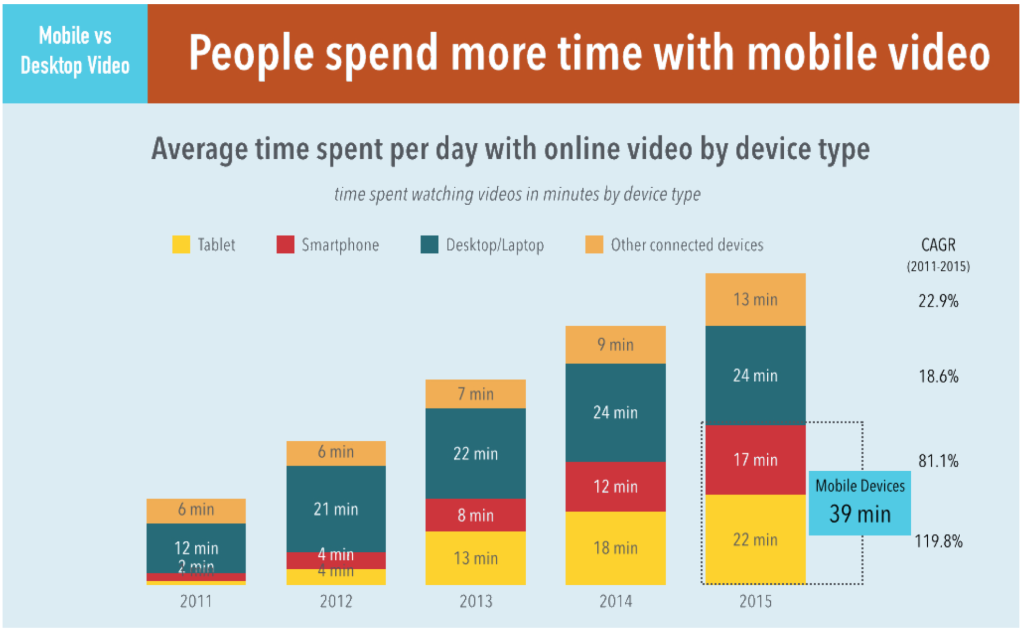

Data from Adobe shows that tablet and smartphone viewing accounted for nearly 40 minutes of daily video viewing in 2015. This growth has not come at the expense of desktop or connected devices; mobile is driving overall growth in video consumption and will continue to be a major story in 2016. While this is good news overall, it presents a number of new challenges for publishers in 2016.

What this data shows is that video viewers are increasingly accessing content through multiple entry points throughout the day. These entry points, by nature of technology and context, have unique user experiences. What works on desktop can be intrusive, clunky, and bandwidth-hogging on mobile. Those 37 minutes of desktop and connected-device viewing are more continuous than the hop-on/hop-off viewing habits of mobile.

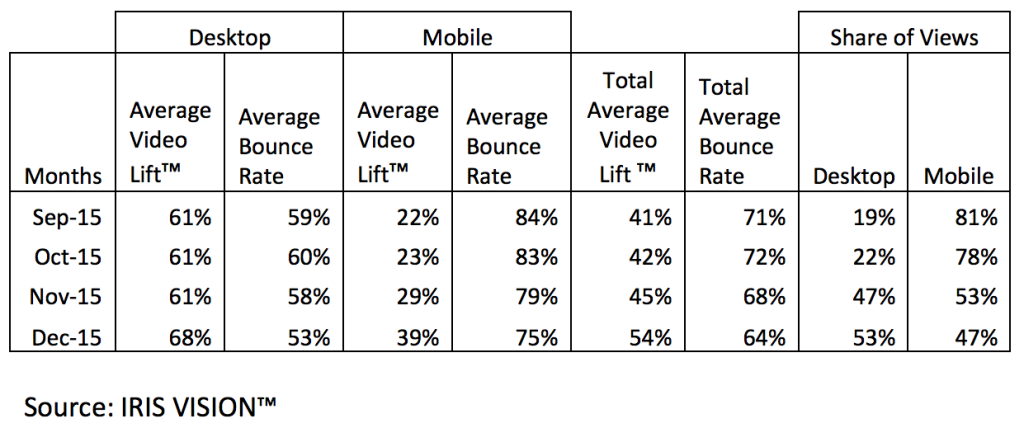

Anonymized data from a publisher that began with a mobile-to-desktop split of 81/19 in September 2015 and moved close to 50/50 by year-end by increasing overall desktop views.

Iris.TV shared with me some data from one of their customers that publishes to both web and mobile environments. Desktop views increased to the point where, by month four, the share of views was split evenly between desktop and mobile. Video Lift increased and bounce rate fell across the board as well. Iris.TV measures the average video engagement in a viewing session as Video Lift: recommended video views divided by initial clicked views. Bounce rate is the share of viewers who exit the viewing experience before completing the initial clicked video. Mobile bounce rates began at 84% and fell to 75% over time. Video Lift increased from 22% to 39%.
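To make the two definitions concrete, here is a minimal Python sketch of how Video Lift and bounce rate could be computed from raw view counts. The `ViewCounts` class, its field names, and the sample counts are hypothetical and are not Iris.TV's implementation or data; the counts were chosen only so that the results line up with the end-of-period percentages quoted above.

```python
from dataclasses import dataclass


@dataclass
class ViewCounts:
    """Hypothetical aggregate counts for one device over one period."""
    initial_views: int       # videos a viewer clicked to start a session
    recommended_views: int   # follow-on views that came from recommendations
    bounced_views: int       # initial videos exited before completion


def video_lift(counts: ViewCounts) -> float:
    """Recommended video views divided by initial clicked views."""
    if counts.initial_views == 0:
        return 0.0
    return counts.recommended_views / counts.initial_views


def bounce_rate(counts: ViewCounts) -> float:
    """Share of initial clicked videos exited before completion."""
    if counts.initial_views == 0:
        return 0.0
    return counts.bounced_views / counts.initial_views


# Illustrative numbers only (assumed, not taken from the publisher's data).
mobile = ViewCounts(initial_views=1000, recommended_views=390, bounced_views=750)
print(f"Video Lift: {video_lift(mobile):.0%}")
print(f"Bounce rate: {bounce_rate(mobile):.0%}")
```

Under these assumed counts, 390 recommended views on 1,000 initial views gives a Video Lift of 39%, and 750 early exits gives a bounce rate of 75%.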

By better engaging users on mobile, publishers can organically drive them to desktop, where there is higher video adoption and lower bounce, which translates into more videos completed per session and more ads served. The data shows that publishers can grow overall value by programming content across devices to engage viewers. User engagement is a critical driver of adoption and retention across platforms, but especially on mobile. For the most part, programming a consistent user experience across devices has been the domain of the TVE/SVOD/OTT offerings from Netflix, Amazon, and the like. Viewers of films and serialized TV are able to pick up where they left off across devices by authenticating with a login. YouTube and a few other mobile apps have been able to offer cross-device experiences, but they are few and far between.

The portion of the market that should be innovating around cross-device programming is publishers of short-form, ad-supported video. Upwards of 70% of short-form video traffic comes from social media, followed by audience development and organic traffic. Social is a mobile medium, with Facebook leading the way. Publishers need to manage the mobile social video experience to drive engagement without becoming too dependent on these platforms for video. By not programming better on their owned-and-operated (O&O) mobile web properties, they are leaving money on the table and missing opportunities to increase user engagement and retention across all entry points.

So what can publishers do? Budgets are tight and not everyone can invest in video-centric apps. But publishers can and should use data-driven business intelligence to determine which videos perform well and in what context. The focus should be on video performance by content category, device, content length, social engagement, and time of day. Programming against those signals would improve the user experience.
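As a rough illustration of that kind of analysis, the Python sketch below groups view events by any combination of dimensions and reports starts, completions, and completion rate per group. The event fields (`device`, `category`, `hour`, `completed`) and the sample records are assumptions made up for this example; a real analytics export would have its own schema.

```python
from collections import defaultdict


def summarize(events, keys=("device", "category")):
    """Count starts and completions for each combination of the given keys."""
    totals = defaultdict(lambda: {"starts": 0, "completions": 0})
    for event in events:
        group = tuple(event[k] for k in keys)
        totals[group]["starts"] += 1
        totals[group]["completions"] += int(event["completed"])
    # Add a completion rate to each group's totals.
    return {
        group: {**t, "completion_rate": t["completions"] / t["starts"]}
        for group, t in totals.items()
    }


# Hypothetical view events, invented for illustration.
events = [
    {"device": "mobile", "category": "news", "hour": 8, "completed": True},
    {"device": "mobile", "category": "news", "hour": 8, "completed": False},
    {"device": "desktop", "category": "news", "hour": 13, "completed": True},
    {"device": "desktop", "category": "sports", "hour": 20, "completed": True},
]

by_device_category = summarize(events)
by_hour = summarize(events, keys=("hour",))

for group, stats in sorted(by_device_category.items()):
    print(group, stats)
```

Passing a different `keys` tuple, such as `("device", "hour")`, slices the same events by time of day per device, which is one simple way to see which content works in which context.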