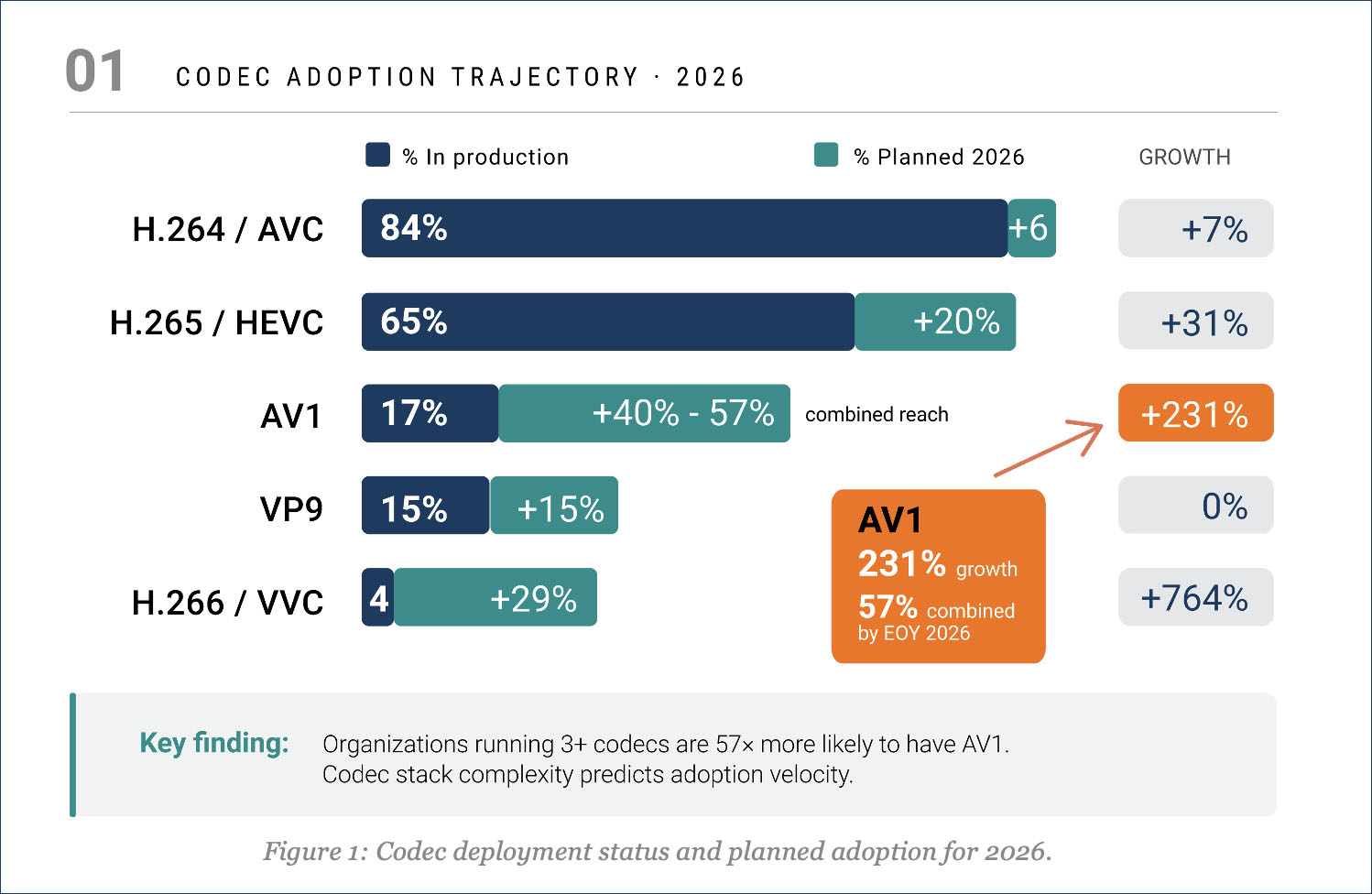

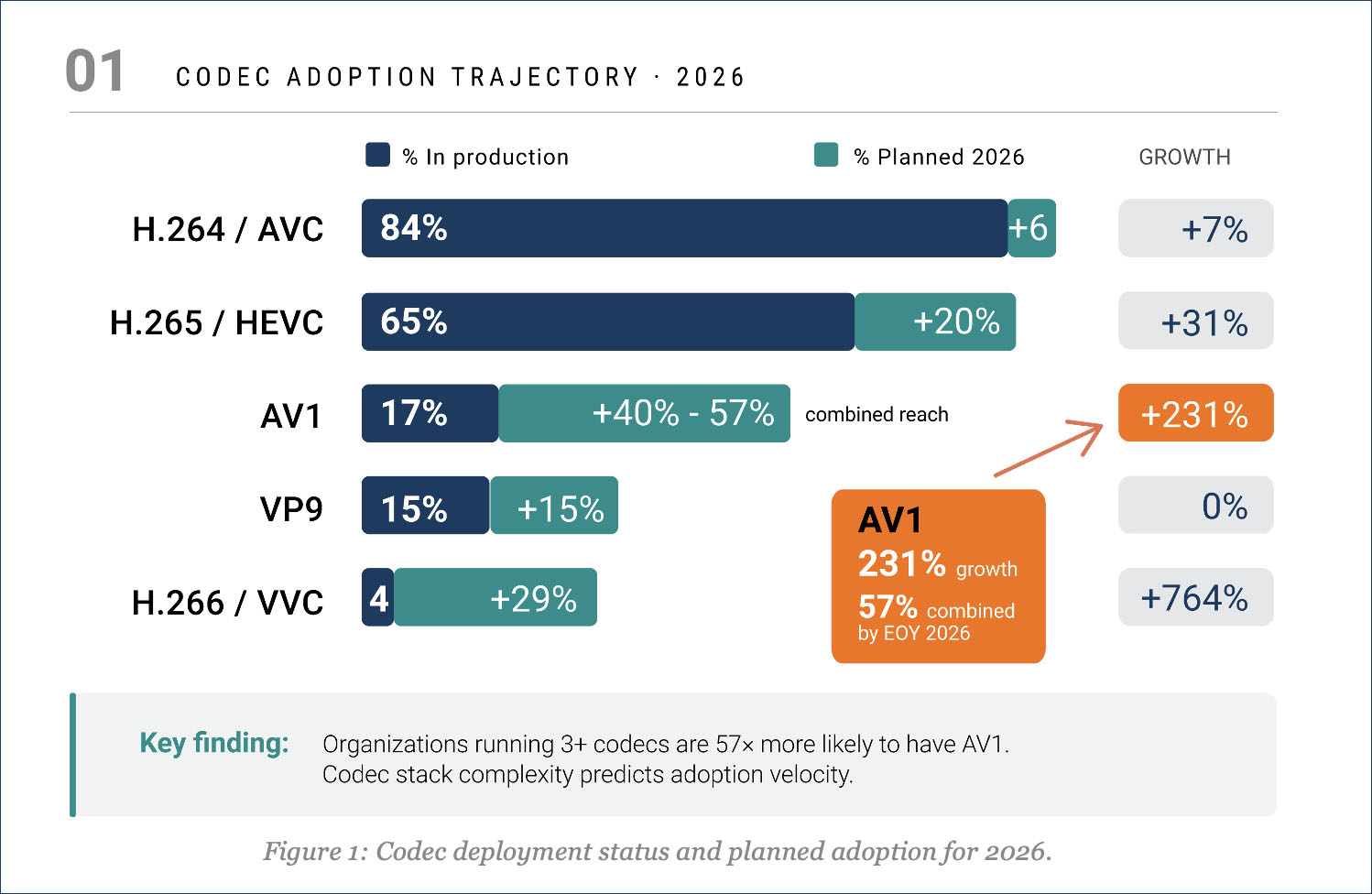

New data from NETINT’s state of video encoding survey shows H.264/AVC sits at 84% production deployment, making the standard effectively universal and plateaued. HEVC is at 65% in production with another 20% planning deployment, putting it on a path toward H.264-level ubiquity. But the breakout number belongs to AV1. At 17% current production deployment, AV1 might not look like much on its own. But 40% of respondents plan to deploy AV1 in 2026, giving it a combined reach of 57% by year-end. That is not early-adopter experimentation. That is mainstream planning.
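The "combined reach" figures above are simple sums of current production share and planned deployments. A minimal sketch of that arithmetic follows, using only the percentages quoted in the paragraph. It assumes the "in production" and "planning" groups do not overlap and that every plan is carried out; neither assumption is confirmed here, so the totals are best read as upper bounds.

```python
# Percentages of respondents, as quoted in the paragraph above.
production = {"H.264/AVC": 84, "HEVC": 65, "AV1": 17}
planned = {"HEVC": 20, "AV1": 40}  # no planned figure is quoted for H.264


def combined_reach(codec):
    """Current production share plus planned deployments, in percent.

    Assumes the two groups are disjoint (an assumption, not a survey finding).
    """
    return production[codec] + planned.get(codec, 0)


for codec in production:
    print(f"{codec}: {combined_reach(codec)}%")
```

On these numbers, HEVC's combined figure lands within a point of H.264's current share, which is the basis for the "H.264-level ubiquity" remark.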

The barriers to AV1 adoption are worth noting because they are not primarily about the codec itself. Hardware decode support leads at 54%, followed by toolchain limitations at 43% and encoding compute costs at 37%. Licensing is essentially a non-issue for AV1, with less than 1% of respondents citing it as a barrier. This stands in sharp contrast to VVC, where 44% flagged licensing and royalties as a concern. That licensing gap alone may determine which next-gen codec is deployed first.

One additional data point I found interesting: organizations running three or more codecs in production today are 57 times more likely to have AV1 in their stack compared to single-codec operators. Current codec stack complexity is the strongest predictor of future adoption velocity. That pattern makes intuitive sense. Teams with multi-codec experience already have the tooling, testing workflows, and operational maturity to add another format without starting from scratch.

VP9 offers a cautionary data point here. It sits at 15% production deployment among respondents, with only 15% planning further adoption and 70% reporting no plans at all. VVC generates interest in evaluation, with 29% planning to adopt it, yet the successor to HEVC remains at just 4% in production deployment. VVC is clearly facing both licensing headwinds and toolchain immaturity, a combination AV1 does not share. The report describes a clear codec progression ladder: organizations tend to climb from H.264 through HEVC and VP9 to AV1, meaning the current stack is a better predictor of next-gen adoption than company size or industry vertical.

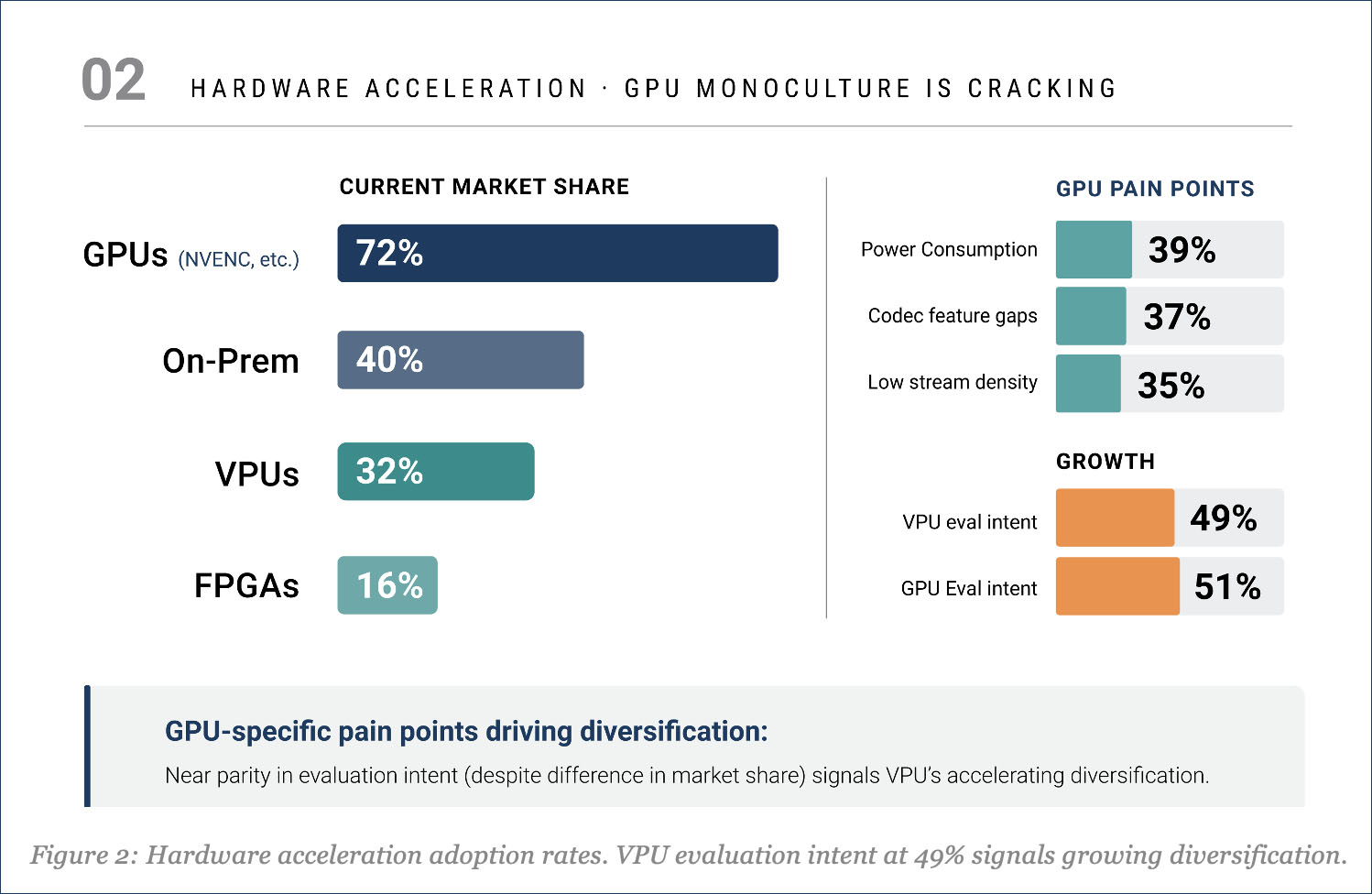

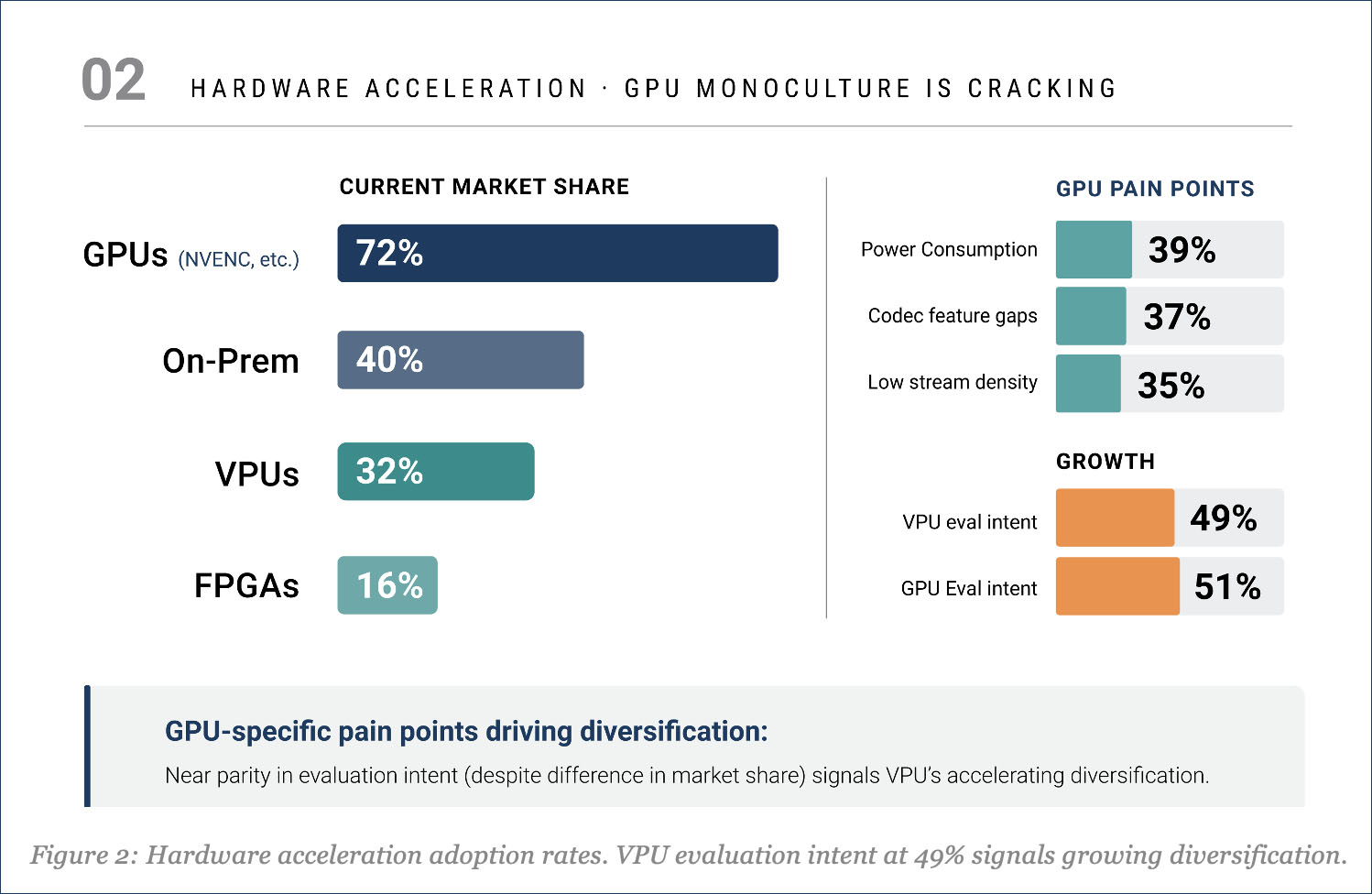

GPUs Dominate, But the Hardware Picture Is More Complicated Than It Appears

GPUs hold 72% of the hardware acceleration market share, driven primarily by NVIDIA’s NVENC ecosystem. That number is not surprising. What is more revealing is what is happening underneath it. Among organizations using hardware acceleration, 41% deploy multiple hardware types simultaneously—GPU plus VPU, GPU plus on-premises appliance, or broader combinations. This is not a one-size-fits-all market. Organizations are matching different hardware to different workload profiles, and the data suggests this trend is accelerating rather than consolidating.

GPU-specific pain points explain the hardware diversification. Power consumption leads at 39%, followed by codec/feature gaps at 37% and insufficient stream density at 35%. For live and high-density workloads, these are meaningful operational constraints. The report notes that VPU evaluation intent stands at 49%, nearly matching the 51% who plan to evaluate GPUs. Live and broadcast operators are 2.2 times more likely to adopt VPUs, driven by density economics and latency requirements that GPUs can struggle to meet at scale.

The primary encoding method split also tells a story of transition. Software/CPU-based encoding remains the single largest encoding architecture at 37%, while hardware-accelerated (30%) and hybrid approaches (32%) collectively account for 62% of the market. The era of pure software encoding is winding down for anyone operating at a meaningful scale.

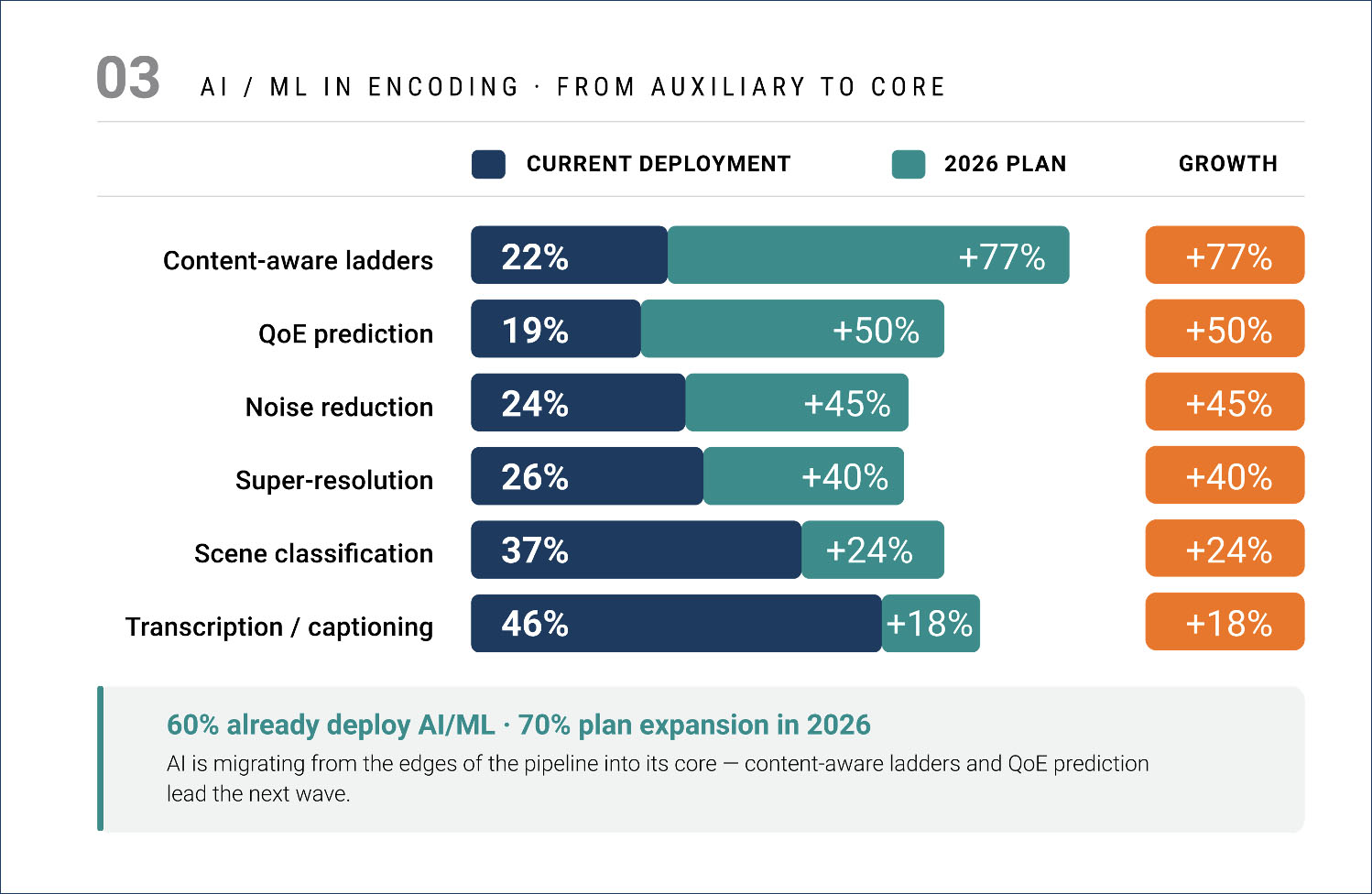

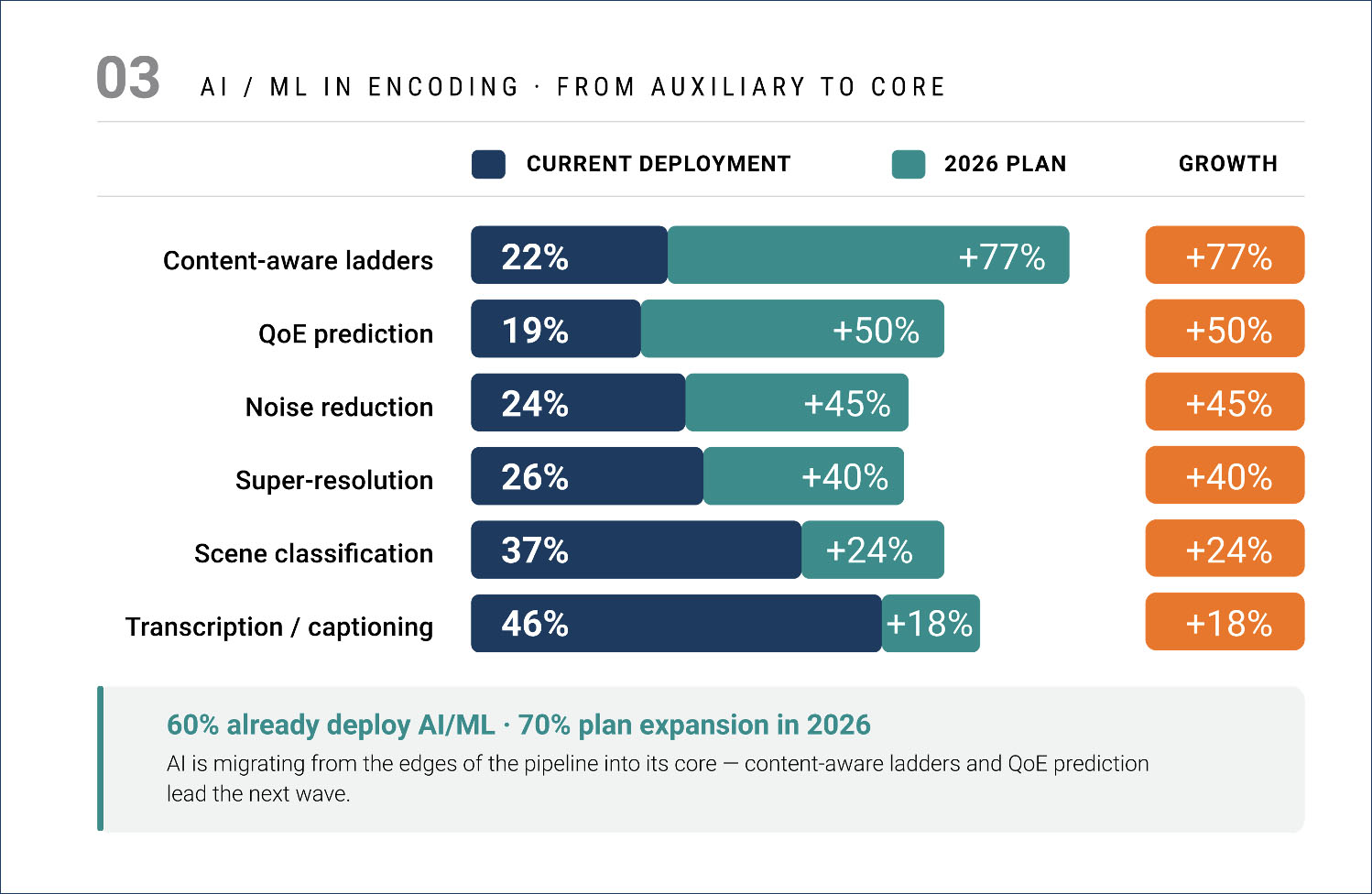

AI in Encoding Has Crossed the Mainstream Threshold

60% of respondents currently deploy AI/ML in at least one encoding workflow, and 70% plan to expand those capabilities in 2026. That is no longer experimental. The more interesting story is where AI is heading versus where it started. Current deployments concentrate on what I would call adjacent applications: transcription and captioning at 46%, scene classification at 37%, and super-resolution at 26%. These sit next to the encoding pipeline but do not fundamentally change how encoding decisions are made. The highest-growth applications tell a different story. Content-aware ladder generation shows the highest planned growth at 77%, followed by QoE prediction at 50%. These are core encoding intelligence functions—they sit inside the pipeline and directly influence bitrate allocation, resolution selection, and quality optimization.
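To make "content-aware ladder generation" concrete, here is a minimal sketch of the selection step. It is not the report's method or any vendor's. It assumes you already have a quality score for each trial encode of a given title; the data, the `build_ladder` name, and the target of 90 are all hypothetical. In an AI-driven pipeline, a model would predict these scores instead of measuring them with trial encodes.

```python
# Hypothetical per-title trial results: (height, bitrate_kbps) -> quality score (0-100).
# In practice the scores might come from a metric such as VMAF or from a predictive model.
trial_scores = {
    (1080, 3000): 86.0, (1080, 4500): 92.5, (1080, 6000): 95.0,
    (720, 1500): 84.0, (720, 2500): 91.0, (720, 3500): 94.0,
    (480, 800): 88.5, (480, 1200): 92.0,
}


def build_ladder(trials, target=90.0):
    """For each resolution, keep the lowest bitrate whose score meets the target.

    Resolutions with no qualifying encode are left out of the ladder.
    Returns a list of (height, bitrate_kbps) pairs sorted by height.
    """
    cheapest = {}
    for (height, kbps), score in trials.items():
        if score >= target and (height not in cheapest or kbps < cheapest[height]):
            cheapest[height] = kbps
    return sorted(cheapest.items())


print(build_ladder(trial_scores))  # [(480, 1200), (720, 2500), (1080, 4500)]
```

The ladder differs per title because the scores do: an easy-to-encode title would hit the target at lower bitrates than a high-motion one, which is where the bitrate savings come from.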

This migration from auxiliary to core defines the AI trend in encoding for 2026. It also explains why AI expansion correlates with operational scale rather than company size. Organizations processing high VOD volumes are 2.3 times more likely to plan AI expansion regardless of employee count. GPU users are 2.4 times more likely, since GPU infrastructure provides the compute foundation for AI workloads. The implication for hardware vendors is clear: offering AI-ready compute alongside encoding capability could become a baseline expectation.

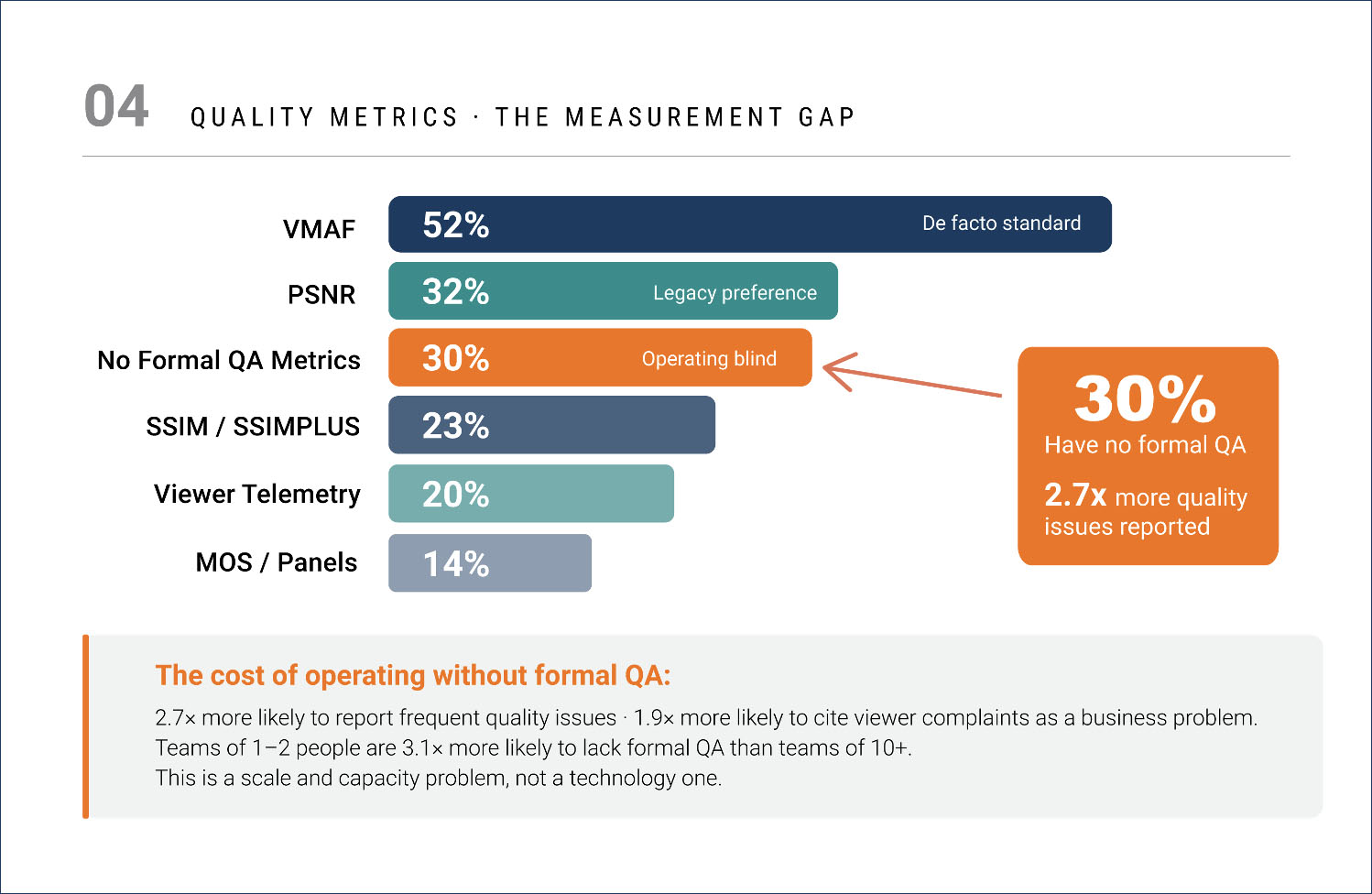

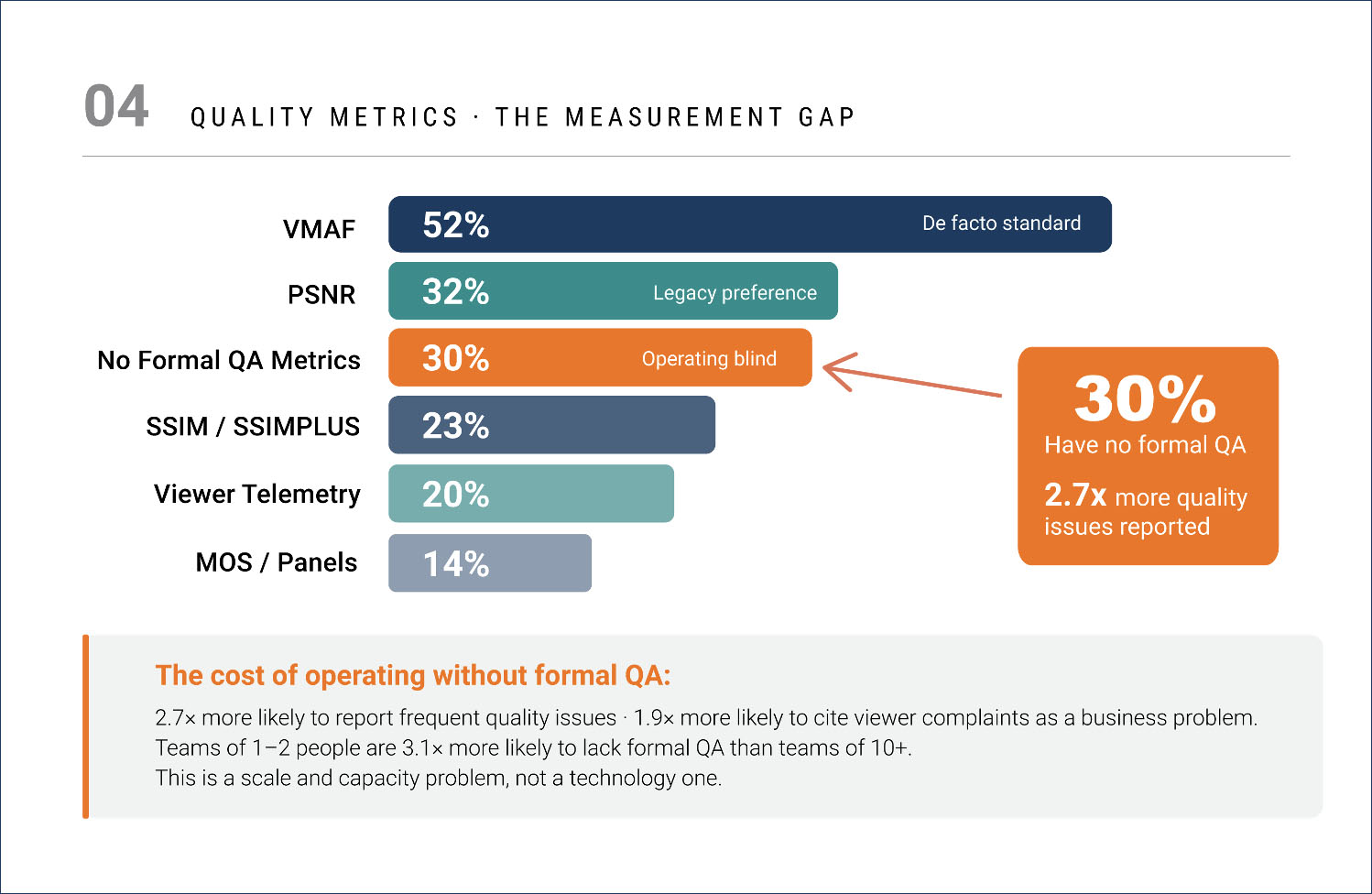

The Quality Measurement Gap Nobody Is Talking About

VMAF has crossed the 50% adoption threshold at 52%, establishing itself as the de facto objective quality metric for the encoding industry. PSNR remains at 32%, mostly due to legacy pipeline inertia rather than active preference. But here is the number I found interesting: 30% of respondents operate without any formal QA metrics.

These organizations are making encoding decisions such as codec selection, bitrate allocation, and hardware investment without systematic quality measurement. The report finds they are 2.7 times more likely to report frequent quality issues and 1.9 times more likely to cite viewer complaints as a business problem. That gap correlates strongly with team size: organizations with one- to two-person video teams are 3.1 times more likely to lack formal QA than those with 10+ person teams.
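For a sense of what the simplest formal metric involves, PSNR is 10·log10(MAX² / MSE), where MSE is the mean squared error between reference and encoded samples. The sketch below is illustrative only, not a production QA tool. It assumes 8-bit samples passed as flat, equal-length sequences; the `psnr` function name and that input format are choices made for this example. VMAF is considerably more involved and is normally computed with dedicated tooling, not by hand.

```python
import math


def psnr(reference, distorted, max_value=255):
    """Peak signal-to-noise ratio in dB between two equal-length sample sequences.

    max_value is the peak sample value (255 for 8-bit video).
    """
    if len(reference) != len(distorted) or len(reference) == 0:
        raise ValueError("inputs must be non-empty and the same length")
    mse = sum((r - d) ** 2 for r, d in zip(reference, distorted)) / len(reference)
    if mse == 0:
        return math.inf  # identical signals: no noise to measure
    return 10 * math.log10(max_value ** 2 / mse)


# Two 8-bit samples, each off by one level: MSE = 1.
print(round(psnr([100, 100], [101, 99]), 2))  # 48.13
```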

This connects to a broader theme in the data. Content Adaptive Encoding (CAE) has nearly identical adoption across live (25%) and VOD (28%) workflows, which the report identifies as the clearest indicator of encoding sophistication, regardless of use case. Organizations using CAE for live workflows are 2.4 times more likely to also use AI/ML in their encoding pipeline. CAE adoption appears to serve as a gateway to broader encoding intelligence. The 30% without formal QA are not just behind on measurement; they are likely missing the optimization wave reshaping streaming economics.

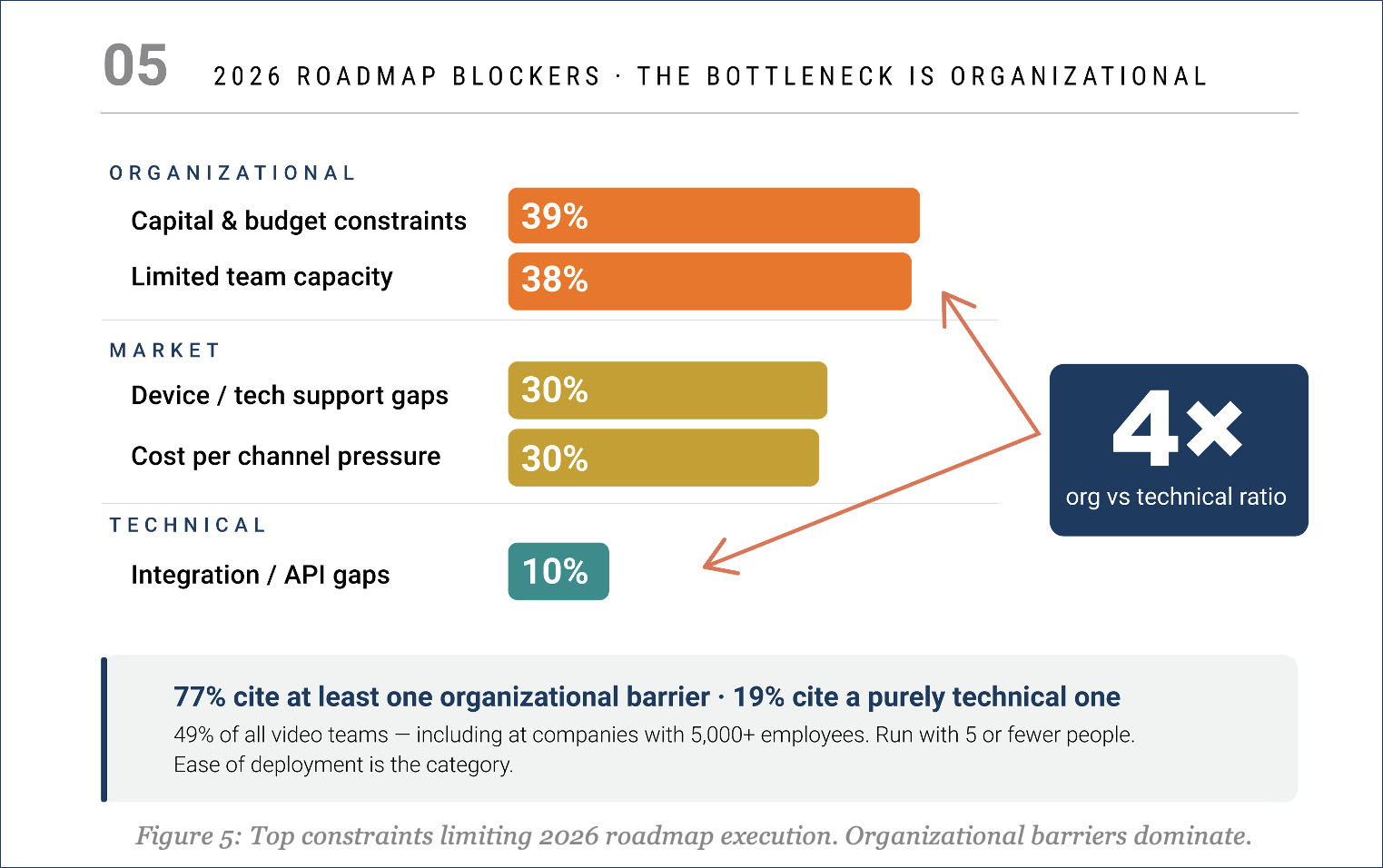

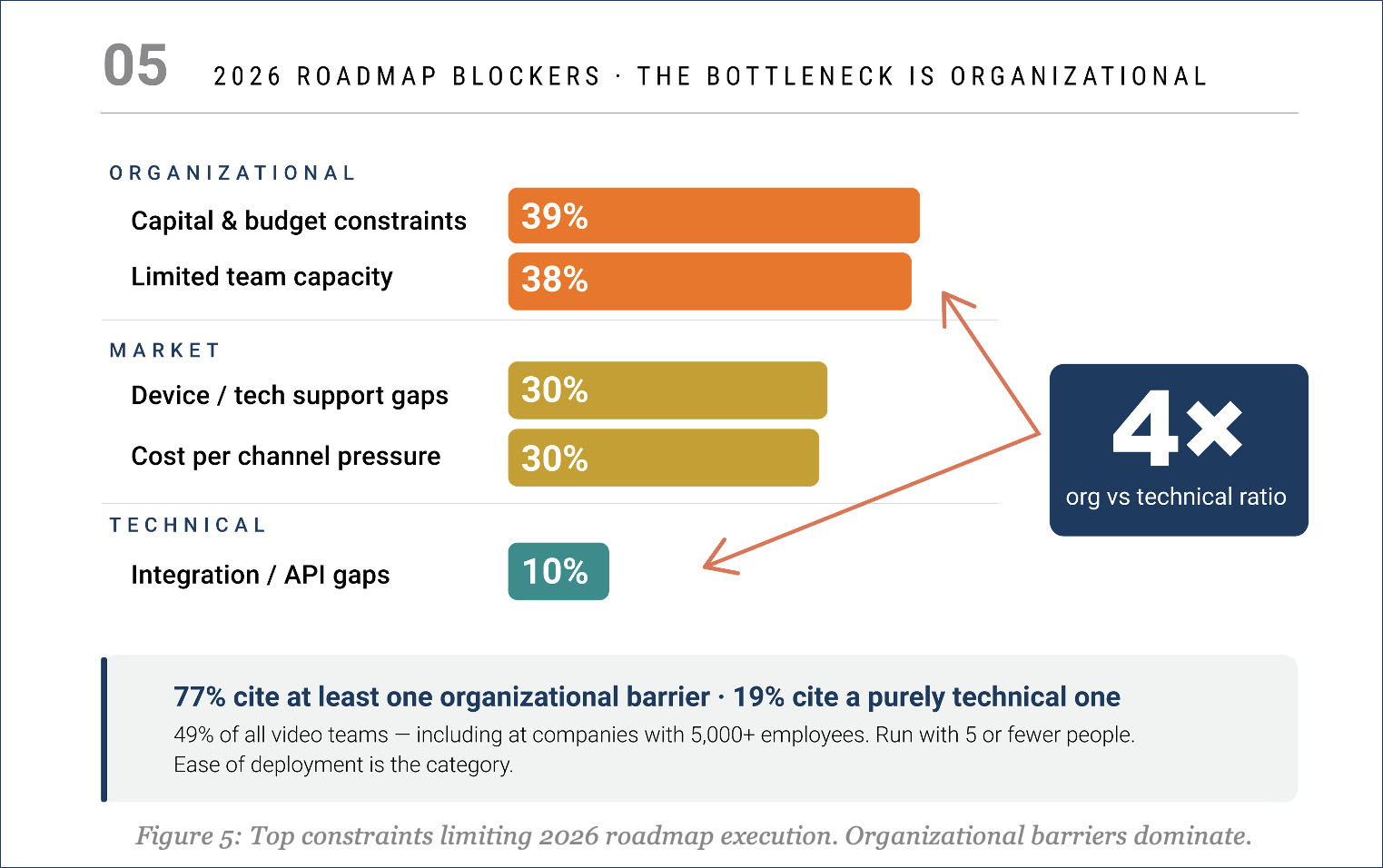

The Biggest Roadmap Blockers Are Not Technical

One of the more revealing sections of the report examines what is actually preventing organizations from executing their 2026 encoding roadmaps. The top constraints are capital and budget limitations (39%) and limited team capacity (38%). Device and technology support comes in at 30%, cost-per-channel pressure at 30%, and integration/API gaps at just 10%.

In other words, 77% of respondents cite at least one organizational barrier, compared to just 19% citing a purely technical one. This shows the bottleneck is not the technology. It is the budget to buy it and the team capacity to implement it. This matters because the data elsewhere in the report shows that 49% of all respondents have video engineering teams of 1 to 5 people. Even among companies with 5,000+ employees, small video teams are not uncommon. When the report says lean teams are the norm across all company size brackets, it has real implications for how vendors should think about integration complexity, time to value, and operational overhead.

Edge Encoding: High Interest, Low Conviction

42% of respondents expressed interest in deploying encoding at the edge, while 30% remain uncertain, the largest undecided cohort on any topic in the survey. Only 29% said they are not interested at all. Among those interested, the top use cases are localized ladder generation (56%) and dynamic ad insertion (48%).

Edge interest follows a U-shaped curve by company size. Small companies (46%) and large enterprises (43%) show the highest interest, while mid-market firms lag at 26–28%. Interest also correlates with operational scale: 50% of organizations running 250+ live channels are interested, compared with 35% at smaller scales. The data suggests edge economics become more compelling as encoding volume increases, regardless of overall company size. But the 30% uncertainty band represents the biggest market education opportunity in the survey. These organizations see the potential but lack the information or confidence to commit.

Live event operators show the strongest interest in edge, at 44%, driven by latency requirements for real-time delivery. This aligns with what I have been hearing from operators at industry events—edge latency is becoming a competitive differentiator for sports and live entertainment. The report suggests that vendors who can reduce ambiguity through reference architectures, concrete use cases, and trial programs will convert undecided prospects faster than those who lead with product specs.

Who Responded and Why It Matters

Before drawing too many conclusions from any survey, it is worth understanding who completed it. Live events and sports broadcasting represent the single largest vertical at 35%, followed by subscription VOD at 18%, AVOD/FAST at 7%, and enterprise communications at 6%. Combined, the streaming verticals (live sports, SVOD, AVOD, and UGC) represent 65% of the sample. EMEA leads geographically at 44%, followed by North America at 40%. The report is transparent about its limitations. Survey distribution through industry channels and NETINT’s network may overweight organizations already evaluating hardware encoding solutions. APAC (19%) and LATAM (16%) are underrepresented relative to their share of global streaming growth. These are fair caveats and worth keeping in mind when interpreting the data.

The combination of executive-level respondents with real budget authority and hands-on engineers with operational experience makes this one of the more credible encoding industry surveys I have reviewed. The fact that nearly half the respondents are VP-level or above means the stated priorities and investment plans are not aspirational wish lists from mid-level managers—they reflect where actual dollars are going.

The report runs more than 50 pages and covers codec adoption, hardware acceleration, AI/ML integration, quality measurement, cost structures, and organizational archetypes. The full report is available from NETINT here. It includes detailed archetype analysis of four distinct organizational segments, build-versus-buy economics, executive priority rankings, and projections. For a vendor-sponsored survey, NETINT deserves credit for producing something with analytical depth.

I’m often asked for a list of in-person conferences and events in the streaming media industry, so here’s an Excel sheet you can download listing nearly 50 events worldwide. For vendors wondering which events are the best fit, I’m happy to do a call with you and share my feedback on which events align with your objectives. Especially if you are new to a vendor’s marketing team, reach out to me. (dan@danrayburn.com)

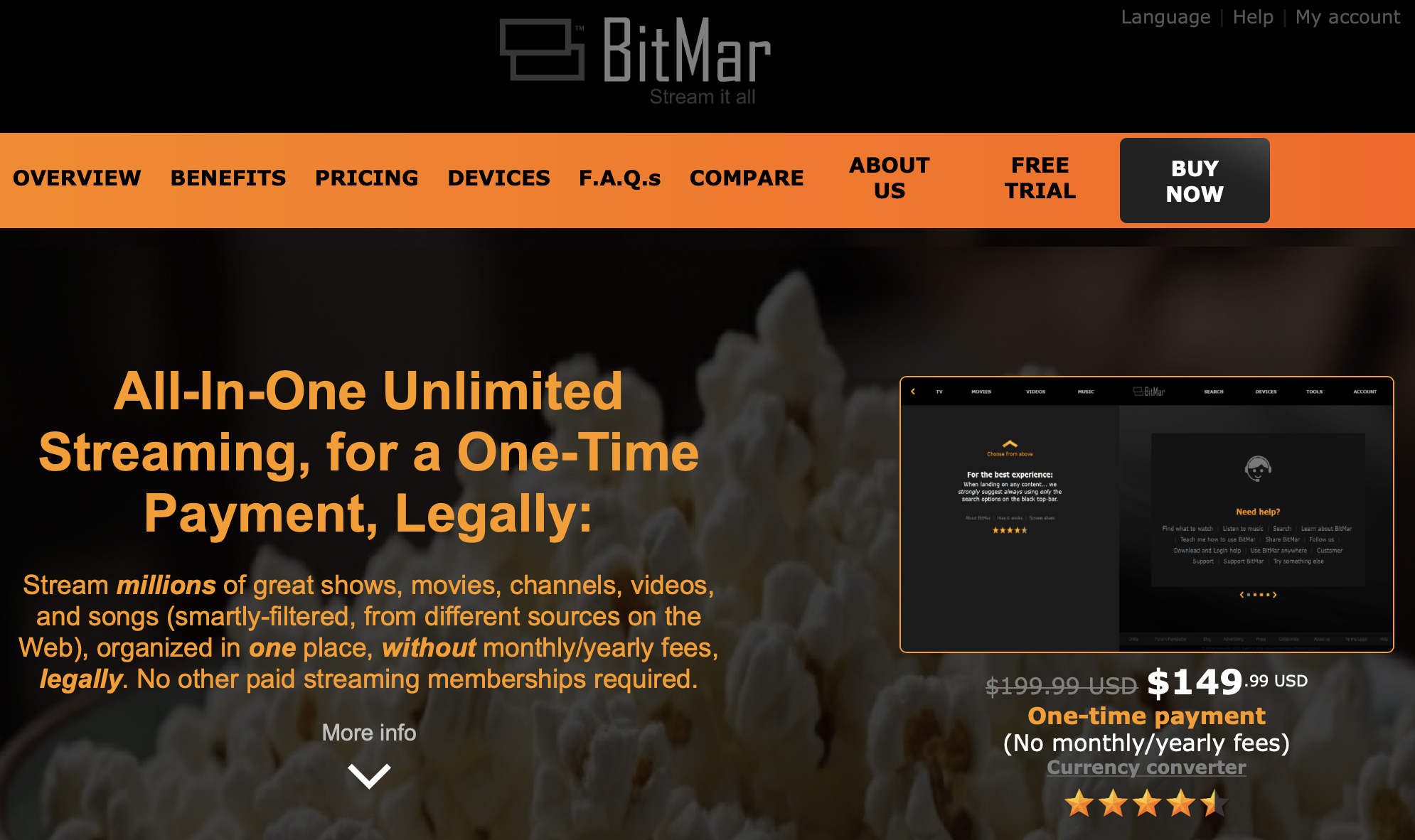

A company called BitMar, which promises to “stream everything legally,” is scamming people out of $150 by linking to free YouTube content via Bing search and Pluto TV. The CEO reached out, offering me a “personal lifetime membership” and hoping I would “inform my audience” about the service. I’ll be happy to inform them. Stay away from BitMar!
